layline.io Blog

Event-driven or bust!? Are you missing out if your business is not event-driven?

You may be missing out if you are *data-driven* only. In fact, every business is data-driven. But you should ask yourself what that really means, and whether it is sufficient for you tomorrow.

Reading time: 7 min.

What does it mean to be “data-driven” (technically)?

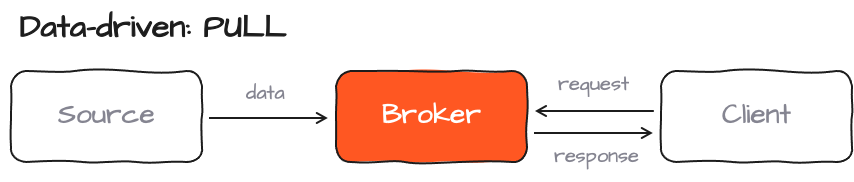

Data-driven means that data manifests somewhere in your business, and other systems - which may require this information for whatever purpose they fulfil - then poll the data from that source system. This could be by way of an ETL tool, a ready-made integration between receiving system and providing system, or simply some sort of custom-made polling interface.

Typical characteristics:

- Synchronous data exchange (request / response pattern)

- Trigger within minutes, hours, days, months

This is a very common architecture which can be found in basically all businesses. A typical example would be an ERP system which is then polled by an ETL system which in turn feeds a data warehouse. There are countless other “data-driven” examples of course.
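As a rough illustration of the polling pattern described above, here is a minimal Python sketch. Everything in it is a stand-in chosen for this example: `SOURCE` plays the role of the providing system, `fetch_since` is a hypothetical query interface, and `interval_seconds` is the trigger period.

```python
import time
from typing import Callable, Iterable

# Hypothetical in-memory stand-in for a source system such as an ERP.
SOURCE = [
    {"ts": 1.0, "order": "A"},
    {"ts": 2.0, "order": "B"},
]

def fetch_since(last_seen: float) -> list:
    """Assumed query interface: return all records newer than last_seen."""
    return [record for record in SOURCE if record["ts"] > last_seen]

def poll_once(fetch: Callable[[float], Iterable[dict]],
              last_seen: float,
              handle: Callable[[dict], None]) -> float:
    """One synchronous request/response cycle; returns the newest timestamp seen."""
    newest = last_seen
    for record in fetch(last_seen):
        handle(record)
        newest = max(newest, record["ts"])
    return newest

def poll_forever(fetch, handle, interval_seconds: float) -> None:
    """The receiving system decides when to ask; data waits until the next cycle."""
    last_seen = 0.0
    while True:
        last_seen = poll_once(fetch, last_seen, handle)
        time.sleep(interval_seconds)
```

The point to notice is that the consumer sets the pace: a record created right after a poll sits in the source until the next cycle, however long `interval_seconds` is.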

Being data-driven is normal and, for most companies, the status quo.

Are you missing something then? Is it … Digital Transformation?

Are you a business that has spent a truckload of money on consultants telling you that you should undergo “Digital Transformation” or bust? You’re not alone. You don’t need that advice, however. Unless you’re still working a mechanical cash register and keep a rolodex on your desk, you embarked on Digital Transformation a long time ago.

Digital Transformation is an evolutionary process. New technology keeps emerging, and even newer technology is always just around the corner. Not all of it may be relevant to your business, but some technologies may be key to streamlining your business further, creating new offers and services, and improving products and processes. One of these trends in the recent past has been the notion of being “event-driven” and “real-time”.

Event-driven data processing

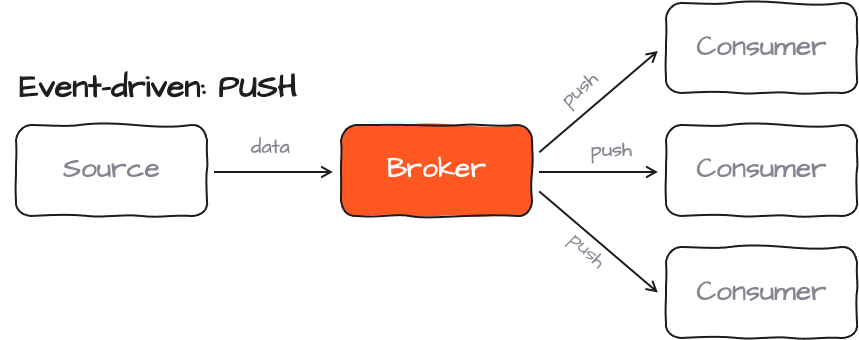

Being event-driven means acting on business events in real-time or near-real-time. One of the main characteristics is that you get a constant stream of business “events” which are pushed from the generating business infrastructure in real-time. Consumers of this data subscribe to the source and ingest the data. Data receipt is not acknowledged back to the source.

Typical characteristics:

- Asynchronous data-exchange (react on events without reply)

- Reaction within 0 to 3 seconds (roughly … should be milliseconds at best)
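The push model can be sketched in a few lines of Python as well. This is an in-process toy, not a real message broker: `EventStream`, `subscribe`, `publish` and the `order_created` events are names invented for this illustration.

```python
import asyncio

class EventStream:
    """Toy event source: pushes each event to every subscriber, expects no reply."""

    def __init__(self):
        self._subscribers = []  # one asyncio.Queue per subscriber

    def subscribe(self) -> asyncio.Queue:
        inbox = asyncio.Queue()
        self._subscribers.append(inbox)
        return inbox

    def publish(self, event: dict) -> None:
        # Fire and forget: the source does not wait for an acknowledgement.
        for inbox in self._subscribers:
            inbox.put_nowait(event)

async def consume(inbox: asyncio.Queue, handle, count: int) -> None:
    """React to events as they arrive instead of asking for them."""
    for _ in range(count):
        event = await inbox.get()
        handle(event)

async def demo() -> list:
    stream = EventStream()
    inbox = stream.subscribe()
    received = []
    consumer = asyncio.create_task(consume(inbox, received.append, 2))
    stream.publish({"type": "order_created", "order": "A"})
    stream.publish({"type": "order_created", "order": "B"})
    await consumer
    return received

if __name__ == "__main__":
    print(asyncio.run(demo()))
```

Here the source sets the pace: the consumer is idle until an event is pushed to it, and `publish` returns immediately without waiting for anyone to confirm receipt.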

As you can see in this short comparison, there is a fundamental technical and architectural difference between data-driven and event-driven data consumption. It’s fair to ask what the point is and where the value of this different type of architecture lies for your business. Let’s look at this in a more general fashion:

Understanding the Event-Lifetime-Value (ELV)

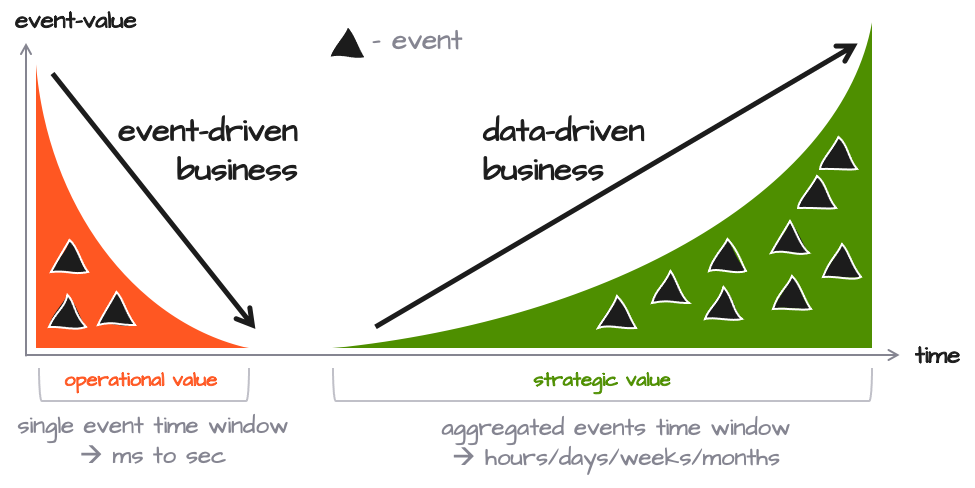

Based on the technical explanation above, you may wonder what this means for your business. From a business standpoint, the real difference between event-driven and data-driven is the time factor and how time can be monetized.

To distinguish the two you can argue that events which are being processed in a data-driven fashion (minutes/days/weeks/months) bear a strategic value which can actually grow over time (green wedge). Events which are processed in real-time on the other hand have an operational value which decreases rapidly as the data ages within seconds (orange wedge).

In other words: The faster (real-time) your reaction to the information, the higher the potential operational value. Conversely, the more data you gather, the higher the strategic value of the aggregate may be. Stock prices are a perfect example: lightning-fast information about price movements bears a very high potential to make the better trade before others do (speed of information). In the longer run, time-series data about specific stock price movements helps to gain better insights into the trends and correlations of a particular stock.

Of course, this is a simplified view as both event-driven and data-driven timing may overlap. It all depends on your business use-cases as well as how you can take advantage of real-time information.

A key point here is that, up to now, many businesses do not use real-time data at all. For them this may be an entirely new source of value which can be turned into a value-add.

So how do you know the actual event-lifetime-value (ELV) in your case then? It’s really hard to measure since there is no “gauge” which reads out the ELV. You have to make your own estimates based on your use-case. It’s always an approximation of some sort. The more you know about the connection between data and (perceived) revenue stemming from it, the better the approximation will be.
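To make such an estimate concrete, one could start from a toy model like the Python sketch below. The curve shapes are purely assumptions picked for illustration (exponential decay for operational value, logarithmic growth for strategic value), and the parameters `half_life_seconds` and `value_per_doubling` are hypothetical knobs you would have to calibrate against your own use case.

```python
import math

def operational_value(age_seconds: float,
                      initial_value: float,
                      half_life_seconds: float) -> float:
    """Assumed shape: the value of acting on one event halves every half-life."""
    return initial_value * 0.5 ** (age_seconds / half_life_seconds)

def strategic_value(event_count: int, value_per_doubling: float) -> float:
    """Assumed shape: the value of the aggregate grows with each doubling of data."""
    return value_per_doubling * math.log2(1 + event_count)

if __name__ == "__main__":
    # Hypothetical numbers: an event worth 100 at creation, half-life of 2 seconds.
    for age in (0, 2, 4, 60):
        print(age, operational_value(age, 100.0, 2.0))
```

Under these assumed numbers, an event that is worth 100 when it occurs is worth 25 after four seconds and practically nothing after a minute, which mirrors the orange wedge in the figure. Whether your events decay that fast is exactly what you need to estimate.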

So, should you care about event-driven data processing?

There is no simple answer to this. It depends on your business model, and on whether there would be any identifiable benefit if you had a lot more data available in real-time. It would be negligent not to fully understand what you may be missing out on, however. This is where it makes sense to take a good look around and see where markets and technology are headed, how others are benefiting from it, and what it means to you.

Relevant drivers spawning mega market growth

Digital Transformation is truly transforming the world. This is about the integration of intelligent data into everything that we do. It is about an event- and data-driven world which is always-on, always tracking, always learning and reacting to these findings.

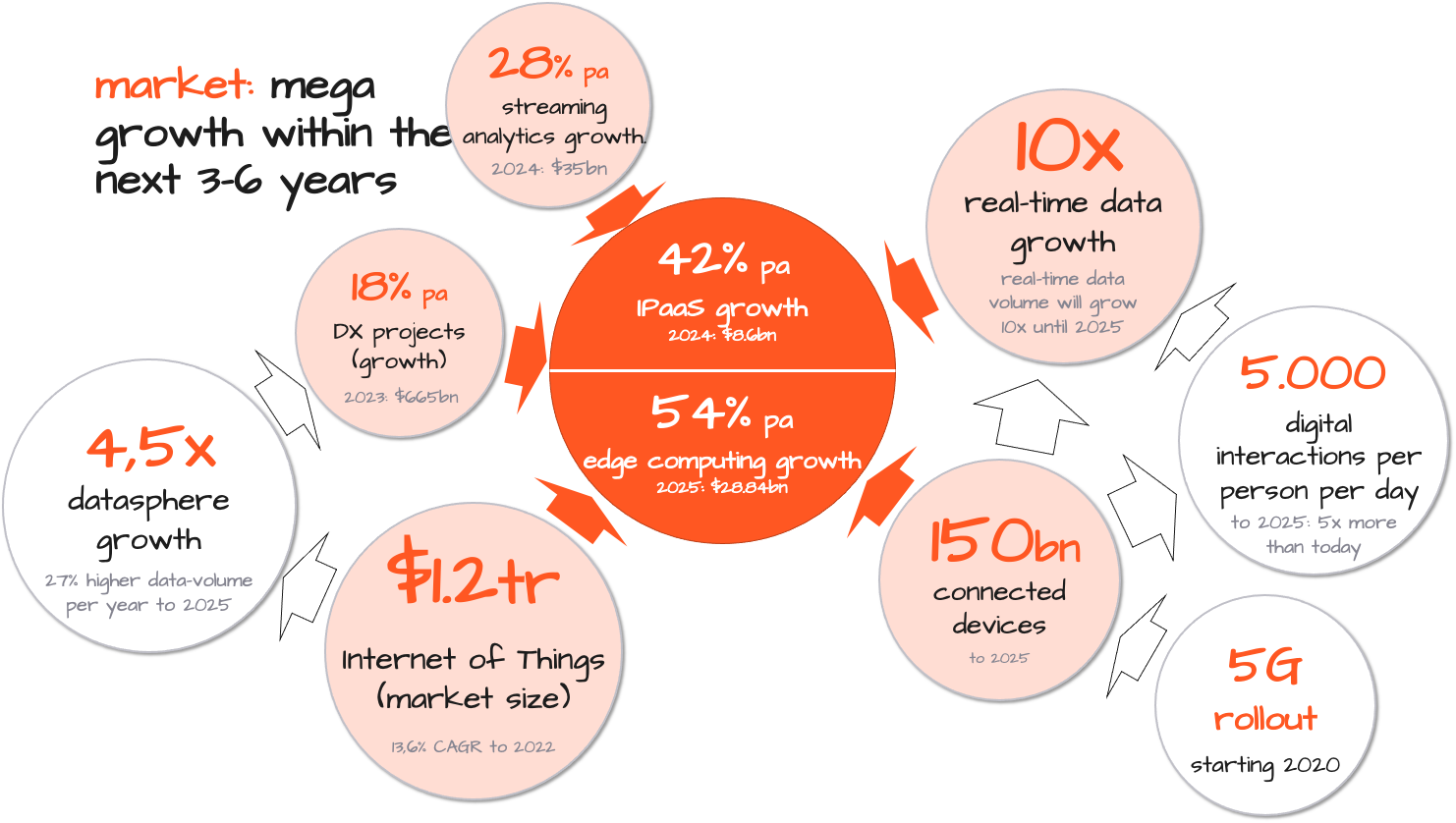

Of course, data is the lifeblood of Digital Transformation, and without it none of the changes we encounter in this arena today and tomorrow would happen. As people, industries and things get more and more connected, more data becomes available. In turn, more value can be harvested from it and new services can be created. This momentum propels data generation and consumption to ever greater heights, and it is predicted that global data generation will grow 28% YoY over the next five years (source: datanami). This growth and the requirements of Digital Transformation put an astronomical strain on organizations. If they haven’t already done so, they need to rethink not only their business strategy, but also how to deliver on it technically.
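To put the 28% figure into perspective, the compounding can be worked out in a couple of lines of Python. The only inputs are the rate and period quoted above; the helper name `growth_factor` is made up for this example.

```python
def growth_factor(annual_rate: float, years: int) -> float:
    """Compound growth: the volume multiplies by (1 + rate) once per year."""
    return (1 + annual_rate) ** years

if __name__ == "__main__":
    factor = growth_factor(0.28, 5)
    print(f"{factor:.2f}x")  # prints 3.44x
```

In other words, if the prediction holds, the yearly data volume would be roughly 3.4 times today's after five years.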

There is no doubt we are looking at an explosion of data volume spawned by technical advancements, which in turn will feed new fires over the next three to six years. 5G has started to roll out, allowing for a huge number of new use cases due to higher bandwidth and extremely low latency. It is estimated that by 2025 we will have 150 billion connected devices and that up to 5,000 digital interactions per day will be attributable to each person. With this, the datasphere, and especially real-time data volume, is poised to grow up to ten times within the next few years.

What is your answer?

We understand that legacy systems are not the answer. Some simple but serious problems are that they are just not designed for these data masses, are usually not cloud-enabled, do not scale, and are the opposite of agile. Failing to get to grips with this is an existential threat to many, and a real competitive problem for most.

One of the main challenges in this setting is how to integrate with all the data sources and sinks quickly, make sense of the information, and ensure that data is handled with low latency and at truly massive scale.

For this purpose, layline.io has taken a new approach and introduced its Reactive Data Integration solution.

It connects to virtually anything and can interpret, process, forward and interact with everything on all levels and at the speed of data. Individually configurable workflows fulfil individual tasks. By way of its architecture, tens, hundreds, even thousands of layline.io Reactive Engines can automatically be deployed natively or as microservices (via Docker & Kubernetes) across an array of nodes, forming an actual Reactive Data Integration Mesh. These nodes can range from very small devices on the edge up to large-scale installations in the core data center (on-premise or cloud). Because the engines on the nodes are aware of each other, they can also look out for each other. Load deviations and failures are automatically balanced and neutralized.

In short: It’s a non-stop, immortal, transparent data network which scales in three dimensions:

- From core to edge

- Across nodes

- Even inside a node,

and allows businesses to quickly configure the logic they need when and where they need it, abstracting the underlying infrastructure almost completely.

Resources

- Read more about layline.io here.

- Contact us at hello@layline.io.
